Globally, artificial intelligence (AI) is transforming health care. Evolving from early rule-based systems to current generative models, AI has created new possibilities for how health care is delivered and experienced.1 Primary care, which provides up to 90% of essential health services worldwide,2 represents a natural setting for AI integration. The application of AI in primary care offers potential benefits including enhanced diagnostic accuracy, optimised workflows, and alleviation of workforce shortages by supporting clinicians and enabling self-care at scale. It also brings substantial risks. In this Viewpoint, we explore the potential applications of AI in global primary care, introduce a system-level framework to classify AI tools, examine key challenges, and propose five guardrails for the safe, equitable, and sustainable integration of AI. Although AI in health care is often discussed in abstract terms, the real impact will be realised in everyday encounters between patients and clinicians. We first discuss two plausible near-future scenarios illustrating how AI might shape primary care in contrasting contexts (panel 1).

Search strategy and selection criteria

We reviewed English-language literature and policy documents identified from targeted searches of PubMed and grey literature sources, from March, 2025, to September, 2025, focusing on synonyms for artificial intelligence and primary care. The searches were conducted over multiple days as part of broader internet searches using an evolving set of keywords and terms such as artificial intelligence and primary care, reflecting new concepts and ideas introduced by different coauthors to this Viewpoint.

Panel 1

Two plausible near-future scenarios of artificial intelligence (AI)-augmented primary care

UK general practice

A smoker aged 65 years uses the AI-enabled National Health Service app to report a new, persistent cough with blood-stained phlegm. The integrated chatbot conducts an initial assessment by asking structured follow-up questions and reviewing the individual’s medical record for relevant history and investigations. Based on the assessment, the system schedules a same-day blood test with a health-care assistant, a chest x-ray at the local diagnostic centre, and an urgent consultation with the individual’s usual general practitioner. Before the appointment, the general practitioner receives an AI-generated summary outlining the presenting complaint, flagged risk factors, and suggested differential diagnoses. During the consultation, ambient AI transcribes the interaction in real time and generates prompts on potential red-flag symptoms, evidence-based guideline recommendations, and appropriate investigation bundles. The general practitioner can decide to engage with or ignore these suggestions. After finalising the management plan, the general practitioner reviews and edits the AI-generated consultation notes before approval. A separate tool then generates a patient-facing summary and hyperlinks to further information, which are sent via SMS following the general practitioner’s review and approval.

Rural Botswana

In a remote village, a boy aged 5 years develops a fever and rash. His mother borrows a neighbour’s smartphone to access an online telemedicine platform. She describes her son’s symptoms in her local dialect using speech-to-text, and the AI system responds with clarifying questions in the same language, also requesting photographs of the rash via the mobile phone’s camera. The AI triage tool generates a preliminary list of differential diagnoses and a risk assessment, which are sent to a remote clinician. Supported by AI-driven decision support software, the clinician reviews the case via video link and advises that the boy needs to be assessed by the local community health worker (CHW). The AI system forwards a structured summary and a link to the relevant diagnostic pathway to the CHW’s mobile device. When the boy is reviewed later that day, the CHW records vital signs in the AI tool, which generates a suggested management plan and tailored safety-netting advice. The boy is automatically added to the schedule for the mobile clinic’s next visit, enabling in-person follow-up, medication management, and non-urgent laboratory testing if required. Appointment details are sent by text to the original mobile phone, and the case is flagged within district surveillance systems for monitoring.

These scenarios highlight several challenges that need to be addressed. Before discussing these, we will review the evolution of AI in health care, along with how frameworks can help to interpret the contemporary landscape.

Evolution of AI in health care

AI in health care has evolved considerably over the past decades, progressing from early rule-based expert systems to modern generative models.3 This evolution reflects advances in computing power, data availability, and algorithmic sophistication. In primary care, characterised by high clinical complexity, messy data, and diverse patient needs,4 AI tools are best understood as aids for specific tasks rather than wholesale replacements for clinicians. These tasks can be broadly categorised as task automation (eg, documentation and coding); pattern identification to find associations and enable predictions (eg, forecasting and risk stratification); and content generation (eg, producing text, images, or sound to inform clinical care). AI deployment in primary care exists as a fragmented collection of tools across these domains.

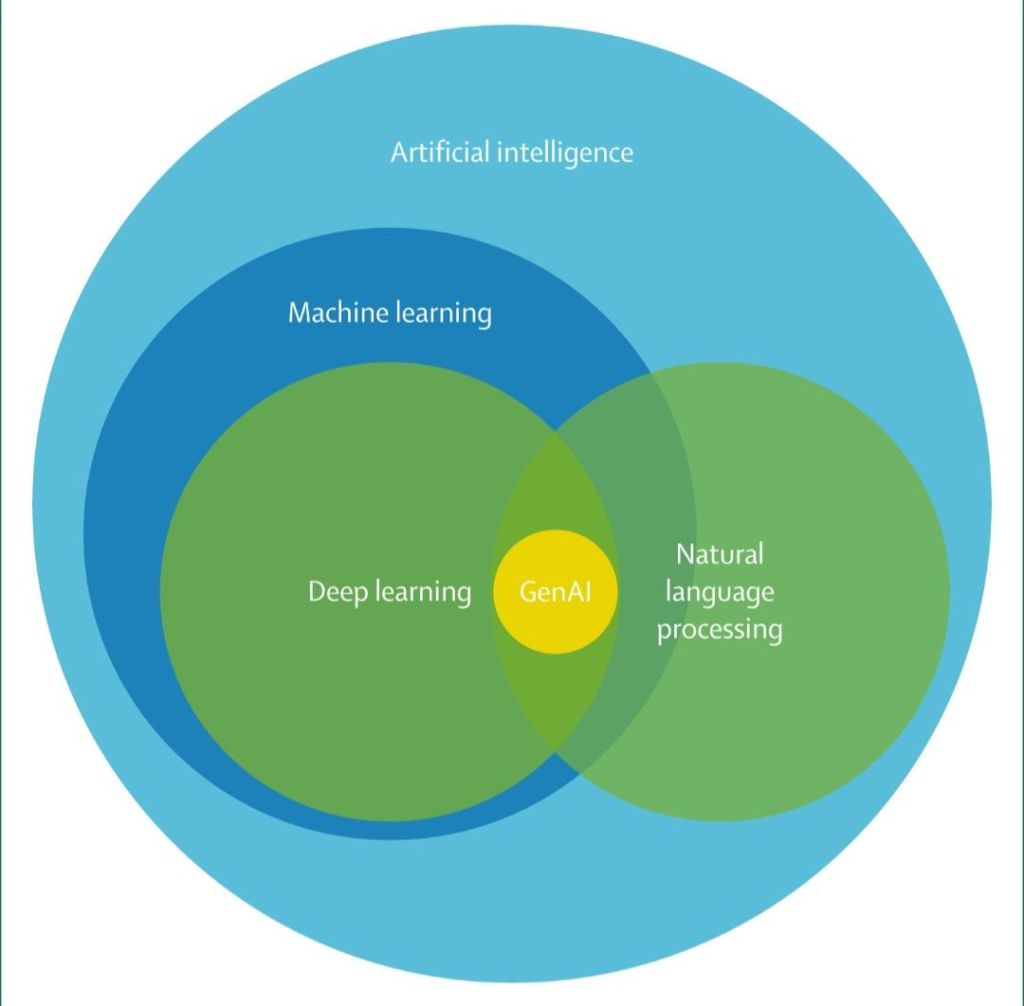

AI applications can be grouped into four overlapping technical categories: rule-based systems, machine learning (including deep learning), natural language processing (NLP), and generative AI (GenAI; figure). Rule-based systems are the earliest form of AI in medicine, dating back to the 1950s and 1960s.5 These systems rely on manually encoded if–then logic derived from clinical guidelines and remain a foundational element of many electronic health records (EHRs). Although rule-based systems lack the ability to adapt and learn, their transparency and reliability make them indispensable for tasks such as generating drug-interaction alerts, vaccination prompts, and routine screening reminders. Rule-based systems continue to form the foundational scaffolding for many more advanced AI approaches.
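The if–then logic of a rule-based system can be illustrated with a minimal sketch. The `Patient` fields, the single interaction pair, and the age threshold of 65 years are hypothetical choices made for illustration only; they are not taken from any real EHR or clinical guideline.

```python
from dataclasses import dataclass, field


@dataclass
class Patient:
    age: int
    medications: set[str] = field(default_factory=set)
    vaccinations: set[str] = field(default_factory=set)


# Hypothetical interaction list, for illustration only (not clinical guidance).
INTERACTING_PAIRS = [
    ({"warfarin", "aspirin"}, "Possible increased bleeding risk: warfarin + aspirin"),
]


def run_rules(patient: Patient) -> list[str]:
    """Apply every hand-written if-then rule and collect the alerts that fire."""
    alerts = []
    # Rule 1: drug-interaction alert when both drugs of a listed pair are prescribed.
    for pair, message in INTERACTING_PAIRS:
        if pair <= patient.medications:
            alerts.append(message)
    # Rule 2: vaccination prompt using an assumed age threshold of 65 years.
    if patient.age >= 65 and "influenza" not in patient.vaccinations:
        alerts.append("Prompt: offer influenza vaccination")
    return alerts


patient = Patient(age=70, medications={"warfarin", "aspirin", "metformin"})
for alert in run_rules(patient):
    print(alert)
```

Every alert can be traced to a specific line of logic, which is the transparency described above; equally, the system never changes unless someone edits the rules by hand.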

Machine learning emerged in the 1980s and 1990s and gained momentum in medical research during the 2000s as digital health data became increasingly abundant.6 Machine learning systems use statistical models to detect patterns and make predictions from data. The most common approach, supervised learning, trains models on labelled datasets (eg, predicting the likelihood of a patient being admitted to hospital using variables from primary care EHRs). By contrast, unsupervised learning uses unlabelled data to uncover hidden structures, such as clustering patients with complex multimorbidity. Reinforcement learning follows a different approach, allowing models to learn by trial and error by adjusting their actions based on feedback from the environment. Machine learning tools can be static, trained once and left unchanged, or adaptive, continually updating as new data become available.
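As a rough sketch of supervised learning, the snippet below fits a small logistic regression by gradient descent to a labelled toy dataset. The data, the two features, and the learning-rate settings are fabricated assumptions for illustration; a real admission-risk model would need far more data, validation, and clinical input.

```python
import math

# Toy labelled dataset (fabricated for illustration): each row is
# (age in decades, admissions in the past year); label 1 = admitted to hospital.
X = [(3.0, 0), (4.5, 0), (5.0, 1), (6.5, 1), (7.0, 2), (8.0, 3)]
y = [0, 0, 0, 1, 1, 1]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, row):
    """Predicted probability of admission for one patient."""
    return sigmoid(bias + sum(w * x for w, x in zip(weights, row)))


def train(X, y, lr=0.05, epochs=5000):
    """Fit weights by stochastic gradient descent on the log loss."""
    weights, bias = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        for row, label in zip(X, y):
            # Derivative of the log loss with respect to the linear score.
            error = predict(weights, bias, row) - label
            weights = [w - lr * error * x for w, x in zip(weights, row)]
            bias -= lr * error
    return weights, bias


weights, bias = train(X, y)
p_low = predict(weights, bias, (3.0, 0))
p_high = predict(weights, bias, (8.0, 3))
print(f"lower-risk patient: {p_low:.2f}, higher-risk patient: {p_high:.2f}")
```

Trained once and then frozen, this would be a static model in the sense used above; calling `train` again as new labelled records arrive would be one simple way to make it adaptive.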

Deep learning, a subfield of machine learning that uses multilayered artificial neural networks to model more complex, non-linear relationships in data,7 gained prominence in the 2010s with image and speech recognition. In health care, deep learning powers diagnostic applications such as tools that classify images or analyse heart sounds.8 The strength of deep learning lies in high-dimensional pattern recognition; however, its black box nature makes outputs harder to interpret, as the underlying reasoning cannot be interrogated in the same way as an if–then algorithm. This limitation is particularly important for approaches that push new boundaries in prediction but are not strictly auditable, such as risk factor assessment from retinal images,9 diabetes screening using chest x-rays,10 and identifying kidney disease from electrocardiograms.11

GenAI, the most recent development in AI, builds on deep learning and NLP to produce new content.12 Large language models (LLMs) such as ChatGPT (OpenAI), Claude (Anthropic), and Gemini (Google) are trained on vast datasets (contentiously, often without the consent of content owners13) and can generate responses, draft documentation, and interact with patients via chatbot interfaces. LLM outputs are based on predicting the most likely next word, and can produce remarkably fluent text. At the same time, GenAI models are prone to hallucinations (fabricating information), bias, and unpredictable behaviour.14,15 Despite these limitations, GenAI holds transformative potential for primary care.

Looking beyond current tools, the trend is moving towards more agentic AI.16 Agentic AI systems are not merely integrated tools following fixed workflows but are autonomous agents capable of pursuing high-level clinical goals by planning and executing multistep tasks. For example, an agent tasked with managing an individual newly diagnosed with hypertension might autonomously schedule appointments, order laboratory tests, draft educational materials, and even run subsequent machine learning-based risk prediction tools. Goal-directed autonomy introduces new challenges related to oversight, safety, medicolegal accountability, and clinical responsibility, all of which will require new governance models.

Even without agentic systems, many contemporary AI applications in health care already combine multiple techniques. For example, a health chatbot might use NLP to interpret text input, machine learning to classify risk, and GenAI to formulate a response. This functional overlap reflects the integrated, multifaceted nature of primary care itself. To navigate this rapidly evolving landscape, it is often more helpful to conceptualise AI in terms of clinical functions rather than technical categories.

Frameworks

Efforts to classify AI use cases in health care typically fall into four broad approaches. Some frameworks focus on technical methods—ie, categorising systems according to whether they rely on machine learning, NLP, or generative models.17,18 Others group AI applications by clinical function, such as diagnosis, triage, or prescribing.19 Another approach considers the stage of workflow—for example, providing support before, during, or after consultation.20 Some frameworks adopt a stakeholder perspective. Lin and colleagues’ seminal framework mapped AI applications across ten problem spaces aligned with patients, providers, and payers or employers.21

Although each framework has value, important limitations remain. Technical classifications of underlying technologies (eg, machine learning vs GenAI) are not particularly relevant in the consultation room. Function-based typologies can be overly broad and lack granularity, whereas workflow-based models struggle to accommodate tools spanning multiple stages of care and are often tied to specific organisational workflows that might not translate across contexts. Although stakeholder-based categories offer useful perspectives, we are concerned that the categories are difficult to map onto system-level decisions regarding governance, regulation, or procurement. Importantly, few models account for health system responsibilities as defined in widely accepted architectural frameworks.

To address these limitations, we mapped common AI use cases in primary care to the WHO digital health interventions framework (table).22 The framework, adopted by many national digital health strategies, categorises digital health interventions according to the functions they perform for four core user groups: clients, health workers, health system managers, and data services. Each function is coded and nested, enabling alignment with financing, supply chain, and governance systems. This system-level focus is particularly suitable for primary care, as implementation decisions should be considered within broader health system responsibilities. While populating the table with examples, we recognised that the term ‘intelligence’ is applied to a wide range of tools, from advanced deep learning applications to simple algorithms. A recent scoping review (preprint) reported that half of all papers on AI in primary care described tools in the ideation or development stages, with less than 10% reporting findings from mature tools deployed in clinical practice.23

| User group | Function | Potential AI use case examples |
|---|---|---|
| Client | 1. Transmit targeted health information to client(s) based on health status or demographics | AI-driven personalisation of SMSs for high-risk groups based on EHR data; behaviour-specific nudges; automated reminders for chronic disease reviews, vaccines, or screening, triggered by AI-generated risk scores |
| Client | 2. Transmit untargeted health information to an undefined population | Generic health alerts during outbreaks via SMSs or social media bots |
| Client | 3. Peer group for clients | AI-moderated patient forums and peer-matching platforms |
| Client | 4. Self-monitoring of health or diagnostic data by client | Wearables for analysing heart rate, sleep, or glucose levels; behavioural nudges |
| Client | 5. Reporting of public health events by clients | Symptom checkers logging structured self-reported data |
| Client | 6. Client look-up of health information | 24/7 AI chatbots for health queries; FAQ-based LLM interfaces |
| Health worker | 1. Verify client unique identity | Biometric ID verification; adaptable patient registration forms |
| Health worker | 2. Manage client’s structured clinical records | Summarisation of EHRs; auto-linking of past notes and test results |
| Health worker | 3. Manage client’s unstructured clinical records | Generate consultation summaries based on free text in the patient records and audio recordings of clinical consultations |
| Health worker | 4. Provide prompts and alerts according to protocol | Prompts suggesting appropriate diagnoses, investigations, and treatment options |
| Health worker | 5. Screen clients by risk or other health status | Triage support; differential diagnosis suggestions; point-of-care alerts (eg, cancer risk and allergies) |
| Health worker | 6. Consultations between remote client and health worker | AI-assisted scheduling and routing; real-time transcription tools for teleconsults |
| Health worker | 7. Transmit non-routine health event alerts to health worker(s) | Routing referrals and internal messages based on urgency or topic using NLP triage |
| Health worker | 8. Manage referrals between points of service within health sector | Auto-generated referral letters with triaged urgency flags |
| Health worker | 9. Schedule health worker’s activities | AI-driven prediction of no-show rates; clinician workload balancing |
| Health worker | 10. Provide training content to health worker(s) | AI-driven personalised learning modules with feedback |
| Health worker | 11. Transmit or track prescription orders | Automated medication reviews; dose adjustment suggestions; drug interaction alerts |
| Health worker | 12. Interpret digitally captured data∗ | AI-driven interpretation of ECGs, skin lesions, and retinal images |
| Health system manager | 1. Monitor performance of health worker(s) | Predictive workforce planning based on service data |
| Health system manager | 2. Manage inventory and distribution of health commodities | Predictive workforce planning based on service data |
| Health system manager | 3. Notification of public health events from point of diagnosis | Predictive workforce planning based on service data |
| Health system manager | 4. Equity monitoring and service gap analysis∗ | Predictive workforce planning based on service data |
| Data services | 1. Automated analysis of data to generate new information or predictions on future events | NLP extraction from free-text clinical notes; AI dashboards for health trends |
| Data services | 2. Classify disease codes or cause of mortality | Auto-coding diagnoses, laboratory data, or correspondence using machine learning |
| Data services | 3. Map location of health facilities/structures | Geospatial machine learning to detect coverage gaps or optimise site placement |
| Data services | 4. Data exchange across systems | AI-based reconciliation of conflicting data formats or record duplicates |

EHR=electronic health record. ∗Function not included in the WHO digital health intervention framework.

The WHO framework provides a scaffold for assessing current AI deployments, identifying areas that require oversight or evidence, and guiding opportunities for investments that might be most impactful. Additionally, this framework allows comparison of maturity across different domains (eg, triage vs documentation) and highlights gaps in current frameworks, such as the absence of explicit domains for diagnostic interpretation or equity monitoring. By grounding AI in the WHO framework, we emphasise that AI is not a single, monolithic solution but a collection of tools and functions spanning patient education, triage, documentation, workforce management, and more. In practice, these tools are layered and combined within workflows, often invisibly.

Despite these strengths, the WHO framework has some important limitations. The current iteration does not include a domain for tools that interpret digitally captured data or a domain focusing on equity monitoring. We have added the domain for tools interpreting digitally captured data under the health worker grouping and the domain for equity monitoring under the health system manager stakeholder grouping (table). Importantly, the framework does not account for approaches that link multiple tools, which will be the core value of emerging agentic systems.24 Mapping AI use cases onto the WHO digital health interventions framework highlights both the breadth of current activity and the gaps in existing taxonomies. However, classification alone cannot address the deeper uncertainties revealed by our near-future scenarios in Botswana and the UK.

Challenges

Primary care is a demanding environment, and our scenarios highlight several challenges that must be addressed to ensure that AI tools are implemented safely, fairly, and sustainably. Although many of these issues are not unique to primary care, the relational, decentralised, and data-fragmented nature of primary care means that the risks and consequences of failure might be particularly acute. Drawing on existing evidence from the fields of AI, information and communication technology, and implementation science, we group these challenges into three broad domains: technical, ethical and social, and operational and governance.

Technical challenges concern how AI systems are trained, validated, and deployed. Bias is a key concern: models built on incomplete or skewed data can systematically misclassify, underdiagnose, or exclude populations—especially those already marginalised.25 Bias is particularly problematic for predictive tools that guide triage or resource allocation, raising questions regarding how to ensure that training data are truly representative. Explainability is another limitation, especially for deep learning systems, as the outputs of these systems remain opaque even to developers.26 Without transparency, errors become more difficult to identify, challenge, or correct, prompting difficult questions on whether we should hold AI to a stricter standard of error than human clinicians and who bears liability. Closely related is robustness: many models perform well in curated test environments but fail when exposed to messy, real-world primary care data. Interoperability presents an additional hurdle: to function safely across the care continuum, AI should integrate with diverse health IT systems and datasets, which are often fragmented or poorly standardised.27
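One simple way to surface the kind of bias described above is to compare a model's sensitivity across patient subgroups. The sketch below does this on fabricated evaluation records; the group names and labels are placeholders, and sensitivity is only one of several fairness metrics that could be used.

```python
from collections import defaultdict

# Fabricated evaluation records: (subgroup, true_label, predicted_label).
records = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 1, 1), ("group_b", 0, 0),
]


def sensitivity_by_group(records):
    """Return the true-positive rate (sensitivity) for each subgroup."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, truth, predicted in records:
        if truth == 1:  # only patients who truly have the condition count
            positives[group] += 1
            true_pos[group] += int(predicted == 1)
    return {group: true_pos[group] / positives[group] for group in positives}


rates = sensitivity_by_group(records)
gap = max(rates.values()) - min(rates.values())
for group, rate in sorted(rates.items()):
    print(f"{group}: sensitivity {rate:.2f}")
print(f"largest gap between subgroups: {gap:.2f}")
```

In this toy example the model misses twice as many true cases in one group as in the other, a disparity that an overall accuracy figure would hide.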

Ethical and social challenges are equally pressing. Accountability is a central issue: who is legally responsible for errors when clinical decisions are influenced or even generated by AI?28 The prospect of shifting tasks to technicians who collect data for AI triage raises further concerns regarding professional roles and tacit skills: what, if anything, do clinicians lose by foregoing the process of taking histories or writing their own notes? Over-reliance on AI risks de-skilling the workforce, creating a strategic vulnerability in the event of outages or cyber-attacks. Autonomy and consent also need safeguarding: patients must know when AI is being used in their care, have the option to opt out, and trust that sensitive data remain secure despite reliance on multiple tools from different organisations, including for-profit companies. Equity is another key concern: does implementing these systems advance a digital-only model of care that could entrench inequalities, or can they be deployed to close gaps? Provider experience is central—are AI prompts helpful or intrusive, and how do patients perceive clinicians’ reliance on algorithmic support? Finally, environmental sustainability is an ethical dimension that is often overlooked: what are the implications of embedding energy-intensive models into everyday practice?

Operational and governance challenges span funding, regulation, and workforce preparedness. Upfront costs are substantial, and health systems—particularly in low-resource settings—might lack the infrastructure to deploy tools safely. Regulatory frameworks are still evolving, and existing guidance is poorly aligned with the complexity of modern AI applications. Monitoring, auditability, and version control are rarely standardised, and procurement is often driven by vendor relationships or legacy pilots rather than systematic evaluation, increasing the risk of fragmentation and duplication.29 A major gap remains in workforce readiness. Although clinicians do not need to become programmers, they do require a new form of AI literacy: the ability to critically appraise algorithmic performance, understand validation contexts, and recognise when outputs are untrustworthy.30 Just as with the D-dimer blood test to screen for deep vein thrombosis, which is highly sensitive but only meaningful in low-risk contexts, the safe use of AI depends on nuanced understanding of its strengths and limitations. Unlike laboratory tests, AI can embed societal bias from its training data.31,32 Another important risk for clinicians to be aware of is ‘drift’, where AI models degrade silently as patient populations, clinical practices, or data patterns change relative to the original training dataset.33,34 Therefore, training clinicians is not a soft requirement but a central pillar of safety, enabling them to manage complex, dynamic, and potentially biased systems. Moreover, the ability to craft effective prompts for AI tools is becoming an essential skill for health-care professionals.35,36
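The D-dimer analogy can be made concrete with Bayes' theorem: the probability that disease is still present after a negative result depends heavily on the pretest probability. In the sketch below, the sensitivity (0.95), specificity (0.50), and pretest probabilities are assumed round numbers chosen for illustration, not published test characteristics.

```python
def post_test_probability_negative(pretest, sensitivity, specificity):
    """Probability of disease given a negative test result (Bayes' theorem)."""
    false_negative = (1 - sensitivity) * pretest      # diseased but test negative
    true_negative = specificity * (1 - pretest)       # healthy and test negative
    return false_negative / (false_negative + true_negative)


# Assumed, illustrative test characteristics.
SENSITIVITY, SPECIFICITY = 0.95, 0.50

low_risk = post_test_probability_negative(0.05, SENSITIVITY, SPECIFICITY)
high_risk = post_test_probability_negative(0.50, SENSITIVITY, SPECIFICITY)
print(f"low pretest risk:  {low_risk:.1%} residual probability after a negative test")
print(f"high pretest risk: {high_risk:.1%} residual probability after a negative test")
```

Under these assumptions, a negative result leaves a residual probability of roughly 0.5% in the low-risk patient but roughly 9% in the high-risk patient. The same reasoning applies to an AI output: its meaning depends on the population in which the tool is used.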

Although we have grouped challenges and guardrails thematically, many issues are not neatly siloed. In practice, deeper tensions span technical, ethical, and operational domains, shaping how AI is implemented, regulated, and experienced. These tensions are summarised in panel 2.

Panel 2

Technical, ethical, and operational tensions in AI implementation

AI=artificial intelligence.

Local relevance versus global scale

AI tools that work well in one country or health-care system might fail elsewhere if not adapted; governance needs to allow local tailoring.

Automation versus clinical agency

Although automating tasks can improve efficiency, it should not undermine professional judgement or the trust patients place in their clinicians.

Innovation versus accountability

AI often reaches clinics faster than regulations can keep pace, creating grey areas regarding liability when errors occur.

Efficiency versus equity

Systems optimised for speed and throughput might overlook patients with complex needs or incomplete records.

Performance versus transparency

Complex AI models might achieve high accuracy; however, the black box nature of these models makes them difficult to interpret or adapt, particularly in multilingual or resource-constrained settings.

Tailoring

The first guardrail focuses on ensuring contextual fit. AI tools must be validated in the settings where they are used, using representative training data, patient populations, and staff. Models developed in US tertiary hospitals might fail in other settings unless adapted.

Responsibility

This guardrail focuses on clear medicolegal accountability for outcomes. Liability for AI-influenced decisions must be clearly defined. Human override and audit trails are non-negotiable, particularly when AI influences triage, diagnosis, or treatment. Risk assessments should take a long-term perspective and consider the impact on workforce displacement. Accountability frameworks should clarify the respective roles of clinicians, vendors, and regulators.

Universality

This guardrail focuses on inclusive design and equitable access for all populations. Evaluations should monitor subgroup performance and bias over time to ensure that marginalised groups are not disadvantaged. Data sources must be representative, and outcomes reported transparently. Patient-facing consent and literacy tools should be available in multiple languages and accessible formats.

Sustainability

This guardrail focuses on long-term viability. AI adoption should be financially, operationally, and environmentally sustainable. Planning should include model retraining, technical support, resilience against outages, and monitoring for model drift.

Transparency

The final guardrail focuses on clarity in design, data, and decision making. Outputs should be interpretable and actionable for clinicians, patients, and regulators. Recommendations should include adequate context to allow clinicians and patients to judge their reliability and respond safely when errors occur.
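Monitoring for model drift, as called for under the sustainability guardrail, can start with something as simple as comparing the distribution of an input variable at training time with its distribution today. The sketch below uses the population stability index (PSI), a technique we have chosen for illustration rather than one named in the guidance reviewed; the ages, bin edges, and any alert threshold are assumptions.

```python
import math


def proportions(values, edges):
    """Share of values in each bin; values outside the edges are not counted."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                counts[i] += 1
                break
    eps = 1e-6  # floor that avoids log(0) when a bin is empty
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(reference, current, edges):
    """PSI = sum over bins of (current - reference) * ln(current / reference)."""
    ref = proportions(reference, edges)
    cur = proportions(current, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Fabricated patient ages at training time and in current practice.
training_ages = [30, 35, 42, 48, 55, 58, 63, 67, 71, 76]
current_ages = [52, 58, 61, 66, 69, 72, 75, 78, 81, 84]
age_bins = [0, 40, 60, 80, 120]

psi = population_stability_index(training_ages, current_ages, age_bins)
print(f"PSI for patient age: {psi:.2f}")
```

A PSI of zero means the two distributions match; a commonly quoted rule of thumb treats values above about 0.25 as a substantial shift, although any threshold should be set and reviewed locally.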

Conclusion

In the words of William Gibson, “the future is already here, it is just not evenly distributed”.50 Algorithmically driven high-risk alert pop-ups are already an (often irritating) part of daily practice in many high-income settings, alongside population health management tools, AI scribes, and numerous other technologies. The question is not whether AI will be used but whether it will reinforce or erode the core values of primary care: continuity, comprehensiveness, first-contact access, coordination, and person-centredness.

In this Viewpoint, we make three contributions. First, using near-future scenarios, we illustrate how AI might reshape front-line encounters in both high-resource and low-resource settings, raising questions of safety, accountability, equity, and patient experience. Second, we map AI applications to the WHO digital health interventions framework, offering a system-level structure that reveals both opportunities and blind spots. Third, we synthesise existing guidance and broader informatics and implementation literature into a novel TRUST framework: tailoring, responsibility, universality, sustainability, and transparency. These principles translate high-level concerns into operational criteria for evaluation and governance.

Our central message is simple: although the continued use of AI in primary care appears to be guaranteed, the value of these tools remains far from certain. Without deliberate governance, inclusive design, and active clinician and patient involvement, these tools risk fragmenting care, entrenching inequities, and displacing professional judgement. However, with appropriate guardrails, AI tools can potentially preserve the relational core of primary care while extending access to high-quality care.

Source: The Lancet Digital Health

Volume 2, Issue 3, March 2026